Introduction

Astera Labs is one of the clearest ways I know to invest in the physical limits of AI data centers. Most of the narrative still lives with the GPU giants like Nvidia. Yet the systems that decide how fast data actually moves between those chips are just as important. That is exactly the layer where Astera operates.

In simple terms, Astera designs connectivity chips that sit between GPUs, CPUs and memory in cloud and AI data centers. They do not train models and they do not run apps. They build the 'plumbing' and traffic control that lets all of that compute run as efficiently as possible.

In this deep dive I will unpack why I believe Astera is an interesting company that is worth your attention. I will use analogies, visuals and real-world examples so that even if you have no technical background, you can still understand what Astera does, why it matters and how I think about the trade-off between quality and price.

With that, let's dive in.

1 - Highlights

If you only remember a few things about Astera Labs, I would pick these:

- Data centers do not function without the technology that Astera provides. Their connectivity chips are essential for the scale-up and scale-out of modern data centers.

- They are founder-led, with an engineer-heavy culture, fully focused on cloud and AI infrastructure

- Astera scaled revenue from $79 million in 2022 to $723 million in 2025 with a very healthy balance sheet, positive free cash flow and >30% free cash flow margins.

But: you have to be willing to pay up for this business and be comfortable with considerable volatility. I'd categorize this as a high-risk/high-reward play.
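To put the revenue figures above in perspective, here is a minimal sketch of the implied compound annual growth rate (CAGR). It only uses the two revenue numbers quoted in the highlights; the function name `cagr` is my own, and treating 2022 to 2025 as three full yearly growth steps is an assumption.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between a start and an end value."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Revenue figures quoted in the highlights, in millions of USD.
revenue_2022 = 79.0
revenue_2025 = 723.0

# Assumption: 2022 -> 2025 counts as three yearly growth steps.
growth = cagr(revenue_2022, revenue_2025, years=2025 - 2022)
print(f"Implied revenue CAGR 2022-2025: {growth:.0%}")
```

On these inputs the implied growth rate works out to roughly 109% per year, meaning revenue more than doubled annually on average over the period.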

2 - Astera's mission

Astera is a highly specialized, 'fabless' designer with one job: remove data, network and memory bottlenecks in hyperscale cloud and AI environments. You can think of Astera as the provider of the communication lines between the chips powering AI. They are not a 'nice to have'; data centers would not function without them.

Management describes their mission as:

“Innovating and delivering semiconductor-based connectivity solutions that unleash the full potential of cloud and AI infrastructure.”

There are a few important questions to answer though:

- How durable is the problem they solve?

- How defensible is their position?

- How concentrated is their revenue?

- How much of their growth and quality is already priced in?

- Is Astera, all things considered, worth investing in?

This deep dive will cover all these topics and more, but let’s start with their founding story.

3 - Founding story and contrarian thesis

Astera Labs was founded in 2017 by three former Texas Instruments engineers. The CEO, Jitendra Mohan, and his co-founders spent years designing extremely fast chips that can communicate clearly in a very “noisy” environment, to put it in simple terms.

The contrarian bet was that connectivity itself would become a first-class design problem in the cloud era. Instead of treating it as a side effect of CPUs and GPUs, there was room for a standalone company that focused entirely on the communication layer that sits between processing units.

That focus shows up everywhere in their portfolio today. They do not build GPUs. They do not build CPUs. They build products that sit between them and make the whole system work at massive scale.

Astera's timeline

2017

Astera Labs is founded in Santa Clara. From day one the idea is simple. Do not build the big chips. Fix the traffic between them. Modern data centers are drowning in data, so Astera chooses to focus purely on how that data moves.

2018 to 2022

In the next few years, the team ships its first connectivity chips and wins early data center customers. They pick TSMC as their main manufacturing partner and add products that move data reliably over very fast network links inside the data center racks and across the data center. In 2022, Astera raises a large funding round at a multi-billion-dollar valuation and leans into “CXL”, a new way to share memory between servers.

2023

They open a large interoperability lab to test Astera chips with hardware from all the major compute and memory vendors (think AMD, NVIDIA, Intel etc.). Revenue passes $100 million and the company starts to look like a real AI infrastructure player, not just a niche startup.

2024

Astera goes public on Nasdaq under the ticker ALAB, launches new generations of its connectivity chips and introduces Scorpio, a “traffic controller” chip for AI servers. It also expands its research and lab footprint closer to key customers.

Today

All four product lines now contribute to revenue, with Aries being the core engine and Leo and Scorpio ramping. Growth is strong, and Astera is becoming a scaled public business. Its memory connectivity chips begin to show up in public cloud offerings, putting the company inside the next generation of AI data centers.

Now that we know the founding story, it's time to take a closer look at the problem they actually solve and the products they've launched to address it.

4 - The problem they solve and why it matters

4.1 - The problem

Modern AI data centers face three related bottlenecks: data, network and memory. The simple analogy here is a busy airport. In this analogy:

- GPUs are the planes

- Data and memory are the passengers and luggage

- Connectivity chips are the runways, gates and taxiways

You can buy more planes. But if the runways are short, the taxiways are congested and the gates cannot handle the flow, the overall system still underperforms.

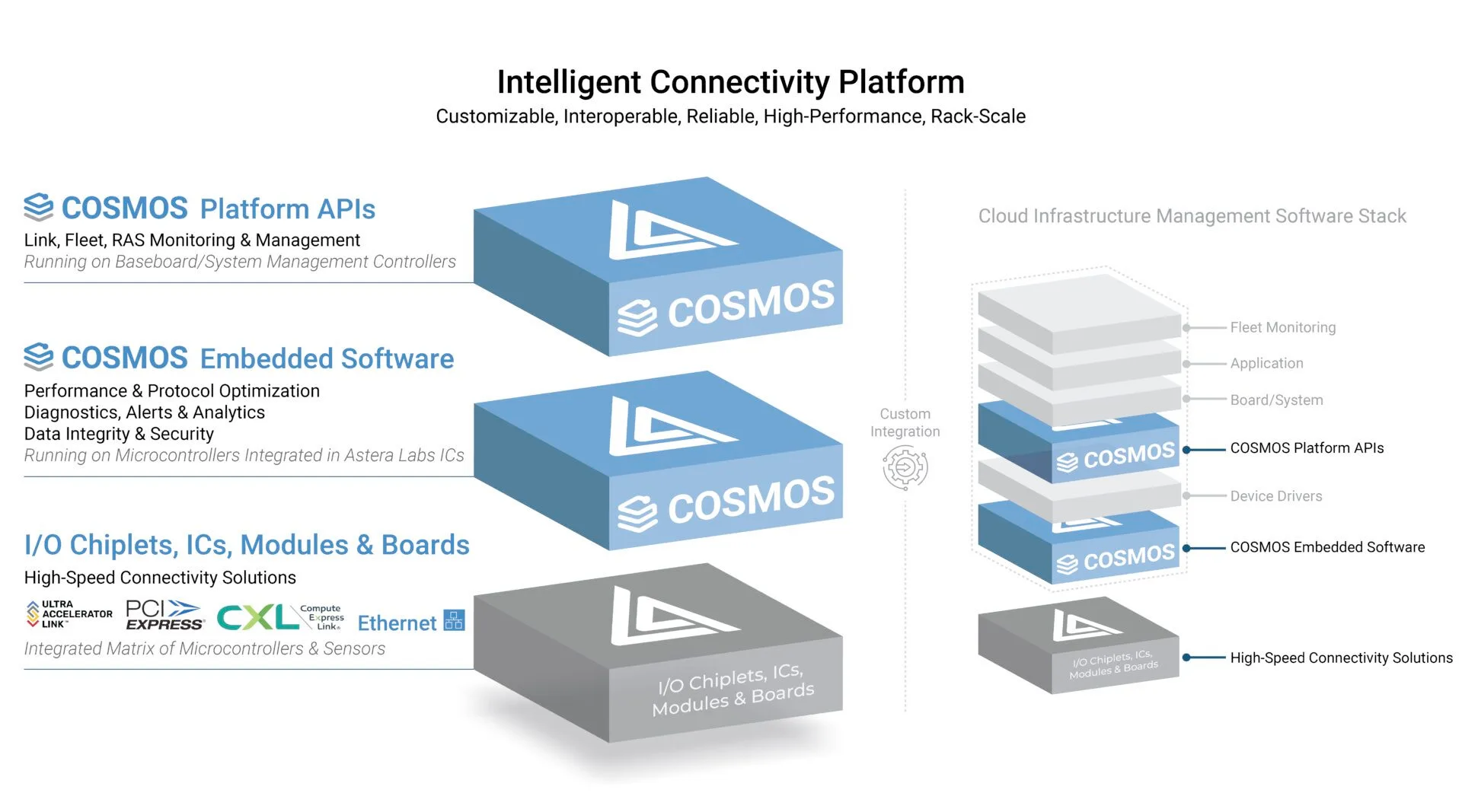

Astera positions its “Intelligent Connectivity Platform” precisely at these pain points. The platform combines high speed connectivity products with an embedded software suite (COSMOS) that configures, monitors and optimizes those links in real time.

Astera is focused on designing, building and running the entire airport ground infrastructure and control system, connecting all these different parts to make sure they operate as effectively as possible.

4.2 - Why it matters

Imagine you’re a CEO and you’ve decided to spend billions on creating an AI cluster, but you only get 70% of the theoretical performance because of bottlenecks. This dramatically increases the cost per unit of compute, directly eating into your margins.

With their product line-up, Astera sells a way to maximize the returns on these AI expenditures. That is a very strategic value proposition in this current AI cycle which is characterized by its massive investments.
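To make the 70% example above concrete, here is a rough sketch of how lost utilization turns into a higher cost per unit of useful compute. The dollar amount and the number of compute units are hypothetical placeholders I picked for illustration; only the 70% figure comes from the example above.

```python
def effective_cost_per_unit(capex: float, theoretical_units: float, utilization: float) -> float:
    """Cost per unit of compute that is actually delivered."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return capex / (theoretical_units * utilization)


# Hypothetical cluster: $1 billion spent for 1,000,000 theoretical compute units.
capex = 1_000_000_000.0
theoretical_units = 1_000_000.0

ideal = effective_cost_per_unit(capex, theoretical_units, utilization=1.0)
bottlenecked = effective_cost_per_unit(capex, theoretical_units, utilization=0.70)

# How much more each delivered unit costs because of the bottleneck.
premium = bottlenecked / ideal - 1

print(f"Cost per unit at 100% utilization: ${ideal:,.0f}")
print(f"Cost per unit at 70% utilization:  ${bottlenecked:,.0f}")
print(f"Premium paid for the bottleneck:   {premium:.0%}")
```

The premium does not depend on the placeholder numbers: at 70% utilization every delivered unit costs 1 / 0.7 times the ideal price, or about 43% more. That gap is the budget that better connectivity is competing for.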

Another important topic is vendor lock-in. If you can deploy vendor-neutral connectivity that works across GPUs, CPUs and accelerators from different suppliers, you reduce lock-in and increase negotiating power. In a world where NVIDIA has sky-high margins, that is definitely worth considering.

With that being said, let us take a closer look at what their product line-up is exactly.

4.3 - A closer look at their products

Normally I would rank the product line-up based on the revenue split per line. Since Astera does not disclose revenue by product line, the ranking below reflects my best judgment based on filings and management commentary.

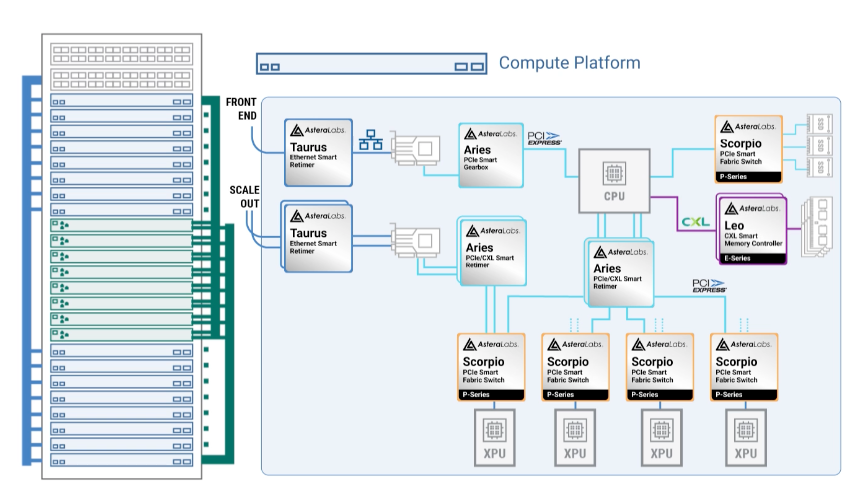

1 - Aries

It’s the core engine in Astera’s product line. This family of chips is tasked with solving an important problem: as processors communicate at ever higher speeds and over longer distances, the signal starts to fall apart. It’s like running a high-speed train on an old track that was never designed for it.

Aries ensures smooth and noise-free traffic. It ensures that GPUs, CPUs and storage can talk at extreme speeds without constant errors so that the AI stack running on these clusters performs as efficiently as possible.

2 - Taurus

Think of Taurus as the smart cables between servers and other modules. The problem that Taurus solves: as you push more traffic through these cables, the signal can get fragile. Taurus is the smart module inside cables that boosts or cleans signals if needed and monitors how healthy a signal is in real time.

Back to the analogy: Taurus is like smart shuttle buses at a large airport that constantly adjust routes and speed to avoid traffic jams and keep a live dashboard for the operations center.

3 - Leo

Leo is all about memory. Today, each server has its own fixed memory, like each gate having its own tiny baggage room, which works up to a point. But AI training and large models need more and more memory, and operators want to use it flexibly. Leo is basically building large shared storage wings that servers can tap into when needed.

It’s still in its early stages and revenue is ramping, but it’s addressing an important memory bottleneck.

4 - Scorpio

At a high level, Scorpio is Astera’s fabric switch for AI servers. A fabric switch is the traffic controller for all the connections inside a server or rack. It’s connecting GPUs as efficiently as possible and making sure there are no bottlenecks.

5 - COSMOS

The software layer that functions as a control room, providing oversight of everything going on. It uses the data from Aries, Taurus, Leo and Scorpio to track whether signals are clear and throughput is high, and to drill down into problematic connections if needed.

It transforms all the independent chips and wires into something that can operate like a system. It likely does not move the revenue needle on its own but adds great value through the visibility it provides to operators, making the overall product suite stickier as a whole.

In short

Aries and Taurus are about keeping individual links clean, Leo is about shared memory, and Scorpio plus COSMOS are about orchestrating everything as one system.

On their website, Astera has a neat visual representation of how all these parts work together, which is worth a look as well.

Now that we have an idea of what Astera does and what they provide, it’s interesting to look at how they position themselves against competitors, a topic that is especially relevant for Astera.

5 - Industry positioning and ecosystem map

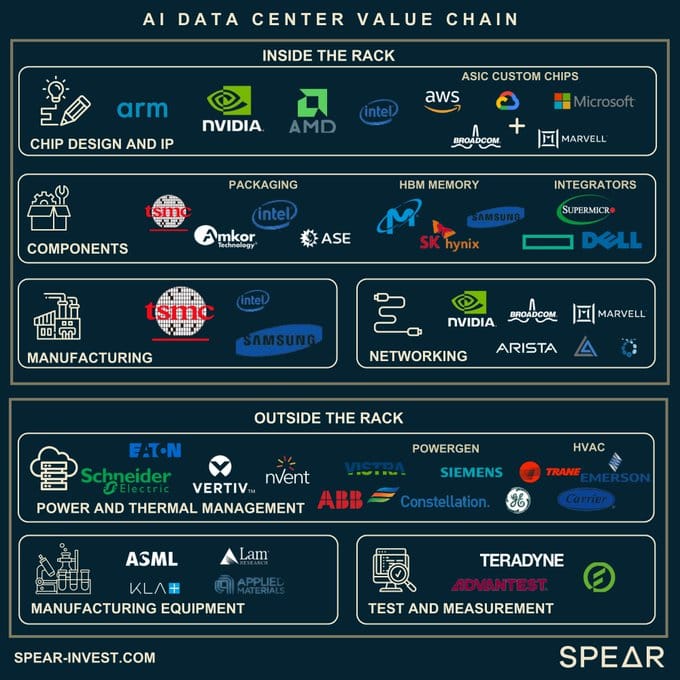

5.1 - Where Astera sits in the stack

If you look at the visual above, there’s a distinction between those inside and outside the rack.

Inside the rack you find companies like NVIDIA, AMD and Intel for compute, Micron and SK Hynix for memory, and NVIDIA, Broadcom and Credo for networking.

Outside the rack you have companies like ASML and TSMC that build the tools and manufacture the chips.

Astera sits snugly inside the rack and connects all of this together. They are the connectivity glue so that GPUs, CPUs, memory and networks from all those logos can actually run at full throttle together.

5.2 - Core competitors and relative positioning

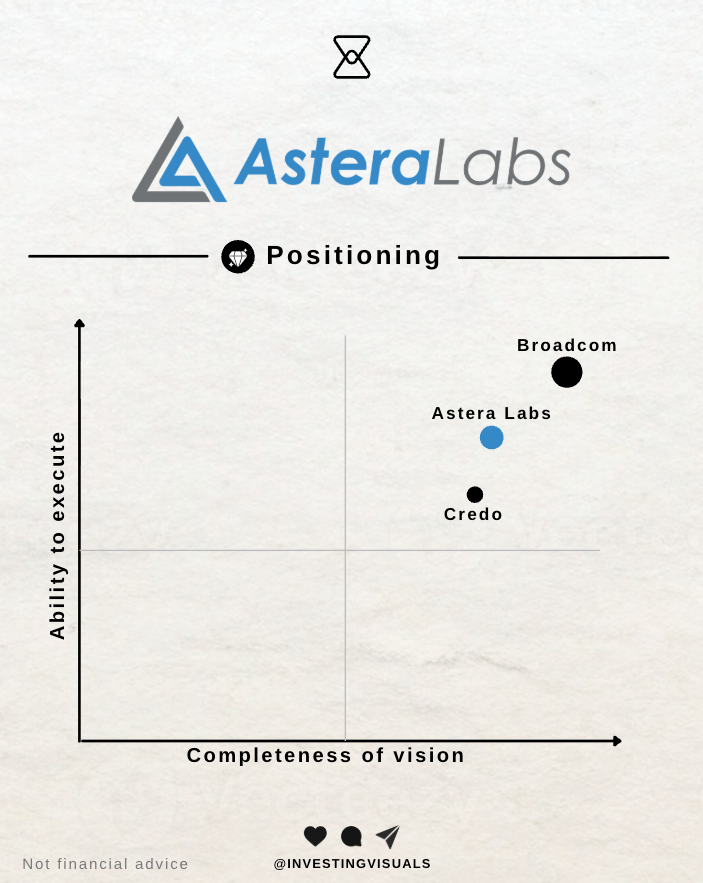

A great way to assess competitors is Gartner's Magic Quadrant. Because Astera doesn’t show up in any of the quadrants due to their relatively small scale, my rough placement would be as follows:

I’d place Broadcom in the top right corner as the incumbent with massive scale and proven execution. Credo builds chips and smart cables that keep data signals clean as they travel between servers and between racks. Astera does that too, but combines it with memory connectivity and a control layer on top through products like Scorpio and COSMOS.

When comparing Astera and Credo to Broadcom, it is good to note that they are playing different games.

Astera and Credo are the specialists, basically the nervous system inside the AI rack, very close to the GPUs. Astera is the one with a more complete product portfolio compared to Credo. Broadcom on the other hand is the widely diversified connectivity giant.

In this large and expanding market, I believe there is room for all three players to do well, but more on that in section 9 - Total addressable market. Before we go there, it's important to first discuss a vital part of Astera's value proposition: their partner network.

5.3 - Partnership network

A strong partner network isn’t just a nice to have when you’re creating products that must be interoperable between multiple vendors.

According to their 10-Q, Astera has “trusted relationships with the leading hyperscalers” such as Microsoft, Amazon, Google and NVIDIA. Astera has very tight integrations with both CPU and GPU vendors and their Scorpio “Operator software suite” is, for example, plugged into NVIDIA’s NVLink.

If you want to be the player that ties it all together, you’ve got to make sure your products are compatible with all these vendors. This is where their “Interop Lab” comes in, short for “Interoperability” (how creative!).

With the ever-growing complexities in AI, their Interop Lab ensures seamless integration and scalability across different vendors. Astera highlights the three key benefits below, which I will touch upon again when discussing their competitive advantages.
