Introduction

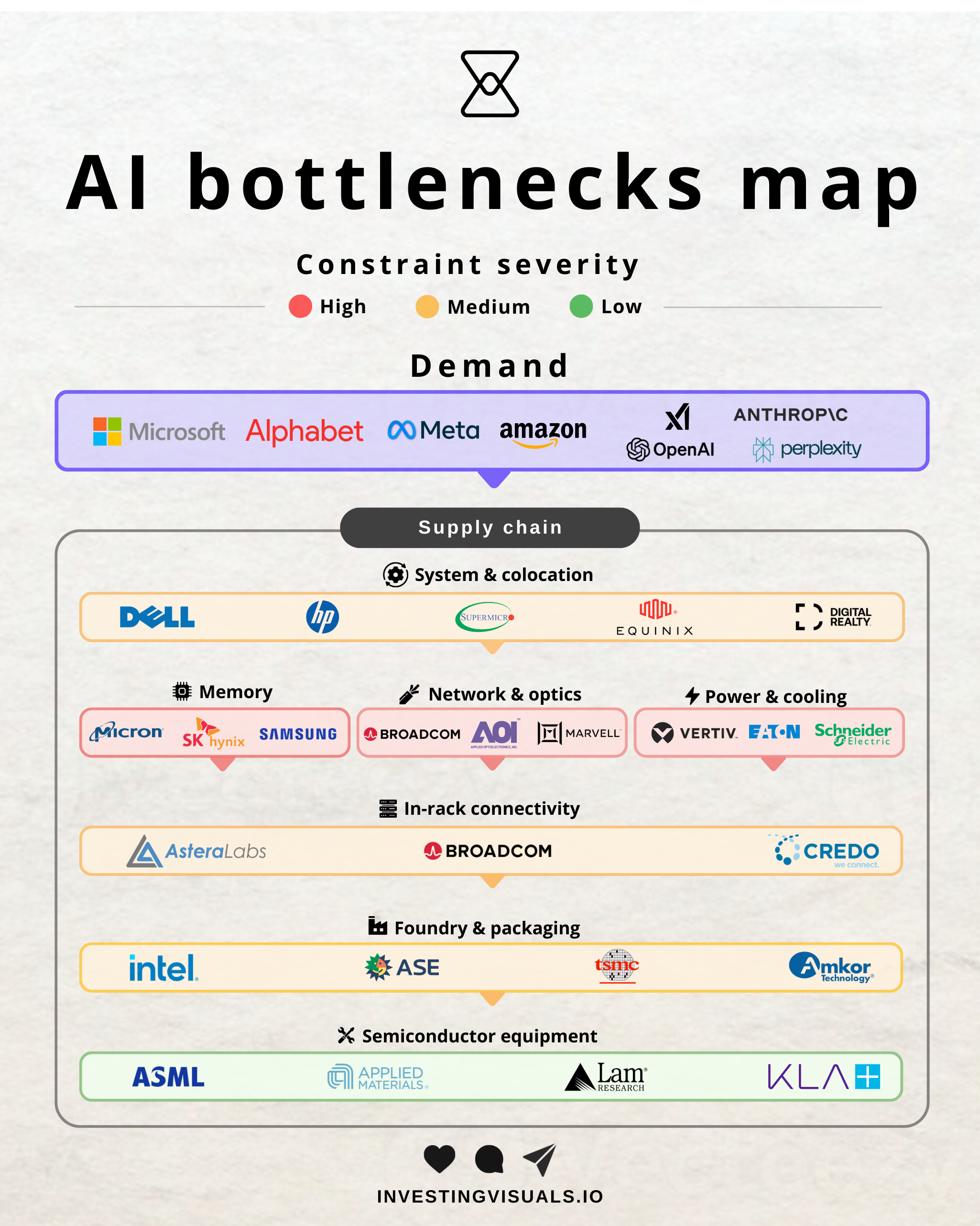

My earlier research piece on AI supply chain bottlenecks sparked my interest in the memory category. Once I started pulling at that thread and digging deeper, I found something that surprised me. The High Bandwidth Memory (HBM) market is projected to grow from roughly $35 billion in 2025 to $100 billion by 2028, a ~40% compound annual growth rate.
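As a quick sanity check on that growth rate, here is a minimal Python sketch that derives the compound annual growth rate from the two endpoints quoted above. The function name `cagr` and the variable names are mine, chosen for illustration only.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate implied by growing from start to end over `years`."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market size figures from the projection above, in billions of USD.
hbm_2025 = 35.0
hbm_2028 = 100.0

implied_growth = cagr(hbm_2025, hbm_2028, years=2028 - 2025)
print(f"Implied HBM CAGR, 2025 to 2028: {implied_growth:.1%}")
```

The result lands a little under 42%, which is consistent with the "~40%" headline figure.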

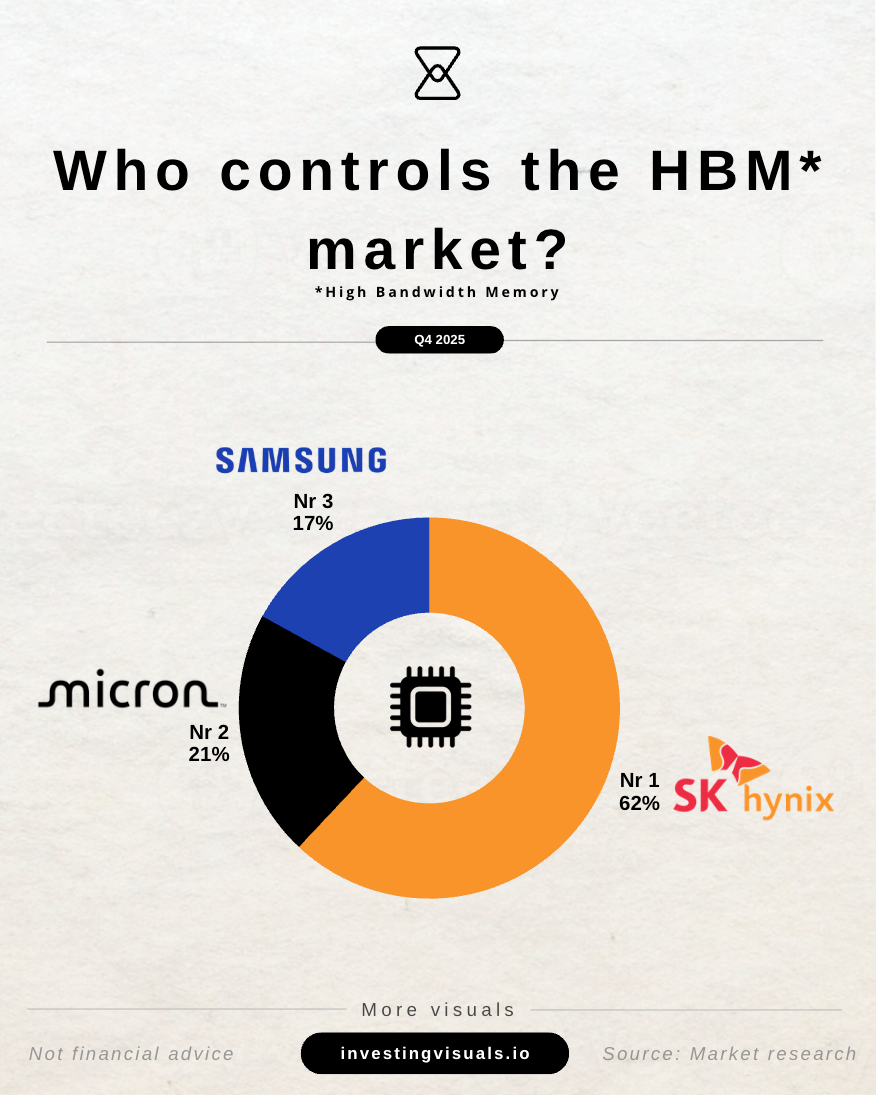

Some forecasts suggest HBM alone will surpass the entire DRAM market of 2024 by that point. Right now, HBM is sold out through 2026 across all three major suppliers. And this segment is basically an oligopoly between SK Hynix, Micron, and Samsung.

That combination of explosive demand, physically constrained supply, and a concentrated market structure is what made me want to go deeper into this segment.

1 - Why memory became the constraint

For most of the last thirty years, memory was a commodity business. Prices moved in very predictable cycles. Producers built capacity, oversupply hit, prices dropped, stocks came down with them. Rinse and repeat. Samsung, SK Hynix, and Micron between them control over 95% of global DRAM production, which helped stabilize the worst of the volatility, but the cycle itself never disappeared. Investors who lived through 2022 and 2023, when all three were reporting operating losses measured in billions, learned that lesson the hard way.

That cyclical nature is now being tested by something qualitatively different from every prior memory cycle.

The rise of large language models and AI inference at scale has created a specific, technically insoluble demand problem. Modern AI workloads are fundamentally memory bound, not compute bound. GPU performance is bottlenecked by data transfer through memory, with HBM bandwidth being the primary governor of effective throughput.

For context: DRAM is the primary high speed temporary memory used in computers and electronics to store data for active applications. HBM, or High Bandwidth Memory, is a specialized form of DRAM designed for high performance tasks like AI and advanced graphics. Think of it as a skyscraper of memory chips stacked right next to the processor.

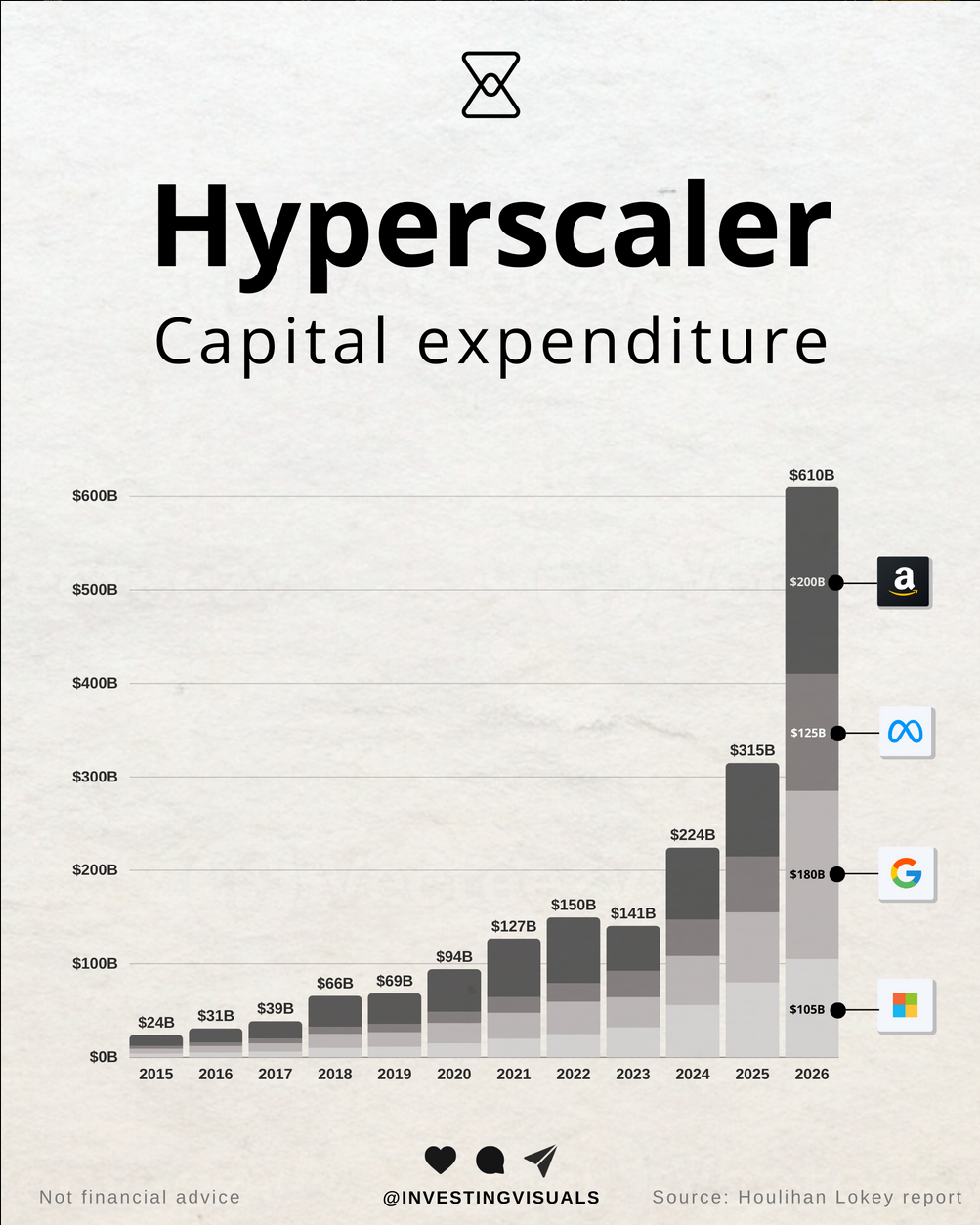

What makes this demand curve different from anything I've seen before is who's buying. Hyperscalers are price insensitive (at least right now). They need the memory because without it their GPU clusters don't run at full utilization, and a billion dollar data center idling at 40% is a far more expensive outcome than paying a 30% premium for HBM. Morgan Stanley described this shift explicitly in a November 2025 research note:

"The core driver of memory demand has shifted from price-sensitive traditional customers to price-insensitive AI data centers and cloud service providers."

The numbers: Gartner projected data center systems spending at $653.4 billion for 2026, up 31.7% from $496.2 billion in 2025. Hyperscaler capex has been revised upward every single quarter for two years running. HBM accounted for at least 30% of total DRAM revenue by 2025, and that share is still rising. ABI Research describes AI infrastructure as "triggering a structural reallocation of global memory manufacturing capacity, creating a shortage unlikely to resolve before 2027."
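To make the hyperscaler trade-off described earlier in this section concrete, here is a rough sketch comparing the capital left idle in an underutilized cluster with the cost of paying an HBM premium. The $1 billion cluster, the 40% utilization, and the 30% premium come from the text. The share of cluster cost that goes to HBM is purely my assumption for illustration, not a sourced figure.

```python
# Inputs from the text.
cluster_cost_usd = 1_000_000_000  # "a billion dollar data center"
utilization = 0.40                # cluster running at 40% utilization
hbm_premium = 0.30                # 30% price premium paid for HBM

# ASSUMPTION (hypothetical): HBM makes up 15% of total cluster cost.
hbm_share_of_cost = 0.15

# Capital that has been paid for but is not doing useful work.
idle_capital = cluster_cost_usd * (1 - utilization)

# Extra spend from paying the premium on the HBM portion only.
premium_cost = cluster_cost_usd * hbm_share_of_cost * hbm_premium

print(f"Idle capital at {utilization:.0%} utilization: ${idle_capital / 1e6:,.0f}M")
print(f"Cost of a {hbm_premium:.0%} HBM premium: ${premium_cost / 1e6:,.0f}M")
print(f"Idle capital is {idle_capital / premium_cost:.1f}x the premium")
```

Even if the assumed HBM share were several times higher, the idle capital would still dwarf the premium, which is the intuition behind the price insensitivity.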

That raises a question I keep coming back to.

2 - Is this still a cycle?

AI isn't like anything the memory industry has encountered before, so it feels illogical to apply the old cyclicality framework to it. But I've learned to be careful with "this time is different" thinking. Getting this question right determines how I look at the entire memory industry and the durability of the investment thesis.

The structural case

The primary argument for structural dynamics rests on a physics constraint. Building a leading edge DRAM facility costs $15 to $20 billion and takes three to five years from investment decision to scaled output.

Micron's Idaho facility, announced in 2024, will produce its first wafer in mid 2027. SK Hynix's Yongin complex won't complete its first fab until May 2027. Samsung follows a similar timeline. So no meaningful new supply arrives before late 2027 at the earliest.

HBM production adds a second layer of constraint. Manufacturing HBM requires approximately three times the wafer capacity compared to conventional DRAM. Every wafer committed to HBM is pulled away from commodity DRAM, tightening both markets simultaneously. DRAM contract pricing surged nearly 172% year over year as of Q3 2025 as a direct result of this dynamic.
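The double squeeze can be sketched with a toy model. I'm assuming the "three times the wafer capacity" figure applies per bit produced, and that the total wafer pool is fixed in the short run. The function name `bit_output` and the 100-wafer pool are hypothetical.

```python
WAFER_PENALTY = 3.0  # from the text: HBM needs roughly 3x the wafer capacity


def bit_output(hbm_wafer_share: float, total_wafers: float = 100.0):
    """Return (commodity_bits, hbm_bits), measured in commodity-wafer equivalents."""
    if not 0.0 <= hbm_wafer_share <= 1.0:
        raise ValueError("hbm_wafer_share must be between 0 and 1")
    hbm_wafers = total_wafers * hbm_wafer_share
    commodity_bits = total_wafers - hbm_wafers  # one wafer yields one unit of bits
    hbm_bits = hbm_wafers / WAFER_PENALTY       # each HBM unit of bits costs 3 wafers
    return commodity_bits, hbm_bits


for share in (0.0, 0.15, 0.30):
    commodity, hbm = bit_output(share)
    print(f"HBM wafer share {share:.0%}: commodity {commodity:.0f}, "
          f"HBM {hbm:.0f}, total {commodity + hbm:.0f}")
```

Under these assumptions, moving 30% of wafers to HBM removes 30 units of commodity supply but adds only 10 units of HBM supply, so total bit output falls while both markets stay tight.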

KAIST Professor Kim Jeong-ho summarized it well in April 2026:

"As long as AI continues, the memory business will transform into an industry with minimal ups and downs, similar to foundries."

For context, TSMC's transformation from a cyclical foundry into a structurally scarce infrastructure provider happened when leading edge logic manufacturing consolidated into a single viable producer. HBM is experiencing a version of that same consolidation, just with three players instead of one.

Where cyclicality remains

Every prior memory cycle that appeared structural ultimately proved cyclical. The Windows PC supercycle of 1993 to 1996 drove 4x growth in memory content per device and looked permanent. DRAM prices then collapsed 51% in 1996 and another 65% in 1997. The 2016 to 2018 smartphone driven supercycle also looked different. Memory revenue peaked for Micron at $30.4 billion in FY2018 and had halved to $15.5 billion by FY2023.

The mechanism of reversal shares familiar characteristics: supply inertia means all three producers commit capex based on current demand signals and build simultaneously. New capacity arrives into a market where demand has either moderated or been met, and prices collapse under fixed cost leverage. That pattern is clearly visible in Micron's stock price over the past decades. And it's clear as day that we're in the boom phase right now.
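The reversal mechanism described above can be illustrated with a deliberately simple toy simulation. Everything in it is hypothetical: the demand path, the three-period build lag, and the rule that producers order capacity equal to the current shortfall while ignoring what is already under construction. It is a sketch of the dynamic, not a forecast.

```python
from collections import deque


def simulate_cycle(demand, lag=3, start_capacity=100.0):
    """Return demand/capacity per period; above 1 is a shortage, below 1 a glut."""
    if lag < 1:
        raise ValueError("lag must be at least 1 period")
    capacity = start_capacity
    pipeline = deque([0.0] * lag)  # capacity under construction
    tightness = []
    for d in demand:
        capacity += pipeline.popleft()      # orders placed `lag` periods ago come online
        shortfall = max(d - capacity, 0.0)
        pipeline.append(shortfall)          # new order ignores capacity already in the pipeline
        tightness.append(d / capacity)
    return tightness


# Hypothetical demand: grows 20% per period for three periods, then flattens.
demand = [100 * 1.2 ** min(t, 3) for t in range(10)]
tightness = simulate_cycle(demand)

for period, ratio in enumerate(tightness):
    print(f"period {period}: demand/capacity = {ratio:.2f}")
```

The ratio climbs well above 1 while capacity is still being built, then drops below 1 once the lagged orders arrive into flat demand. That overshoot is the boom and bust pattern in miniature.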

How I think about this

I believe the supply demand imbalance will persist into 2027 and possibly 2028 as AI inference demand grows exponentially. But the traditional risk of a bust when a lot of new capacity arrives simultaneously is still very realistic. The key question is whether demand will outrun new supply. If it does, this cycle extends longer than any prior one.

In my view, the core difference this time is that the floor has moved permanently higher. AI inference is not discretionary spend that evaporates in a recession. It's embedded infrastructure. And as prices fall, demand likely increases. It's the Jevons paradox: the falling cost of using a resource causes demand to rise so much that total consumption increases rather than decreases.
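One way to see when the Jevons effect actually lifts industry revenue is a constant-elasticity demand curve. This is a textbook simplification that I'm bringing in for illustration, and the elasticity values are hypothetical rather than estimates for memory.

```python
def demand(price: float, elasticity: float, scale: float = 100.0) -> float:
    """Constant-elasticity demand: quantity = scale * price ** (-elasticity)."""
    return scale * price ** (-elasticity)


# Compare total spend before and after a 50% price cut for two elasticities.
for elasticity in (0.5, 1.5):
    for price in (1.0, 0.5):
        quantity = demand(price, elasticity)
        spend = price * quantity
        print(f"elasticity={elasticity}, price={price}: "
              f"quantity={quantity:.1f}, spend={spend:.1f}")
```

Quantity rises in both cases when price falls, but total spend only rises when elasticity is above 1. So the "higher floor" argument implicitly assumes AI memory demand is on the elastic side of that line.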

But that doesn't eliminate cyclicality entirely. I think the amplitude of peaks and troughs will compress over time, creating a business that's structurally better than the memory industry of the past but still not immune to oversupply dynamics. Something more like a higher floor with a lower ceiling on volatility.

Which brings me to the question that matters most for the investment case.

3 - The runway for HBM

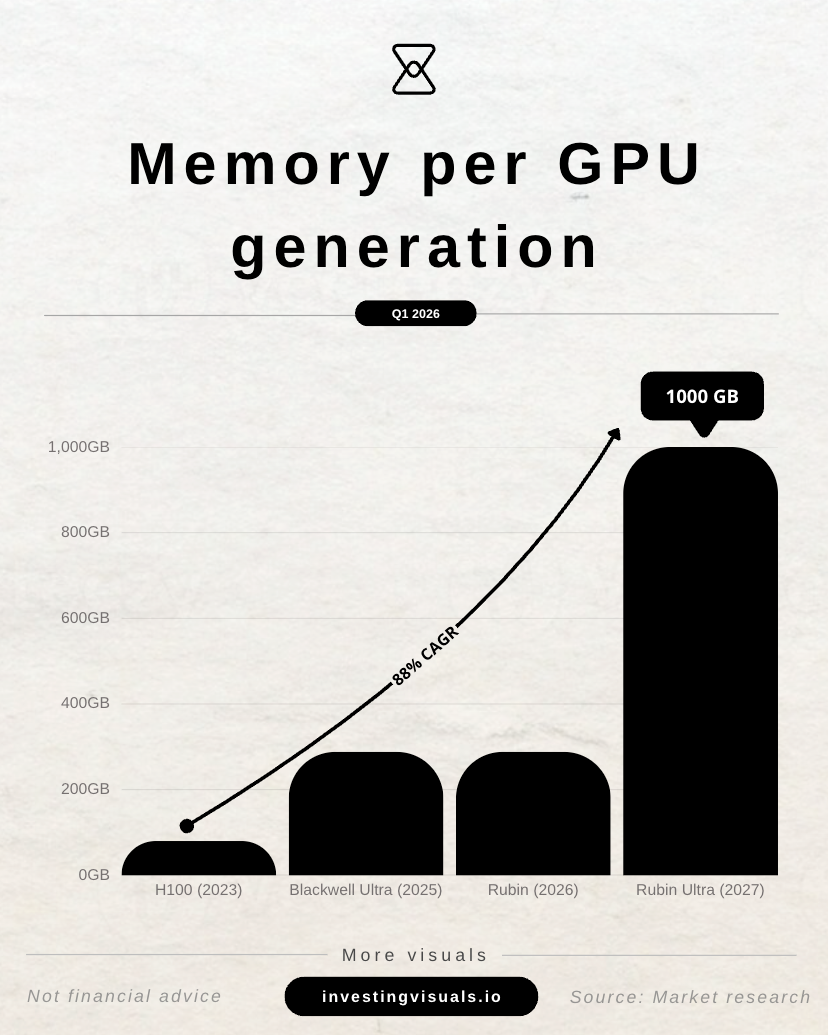

Every new generation of Nvidia's GPU architecture needs more HBM, not less. I believe that is a core part of the memory thesis. What we're seeing is not cyclical demand that peaks and then drops. Demand increases with every product cycle into the foreseeable future.

The progression:

- The H100 (2023) carried 80 GB of HBM

- The B200 Blackwell (2024) carried 192 GB

- The B300 Blackwell Ultra (2025) carries 288 GB

- The Rubin R100 (H2 2026) will carry 288 GB of HBM4 at 13 TB/s bandwidth, a 62.5% jump in bandwidth over B300

- The Rubin Ultra (H2 2027) will carry 1 TB of HBM4E across 16 stacks at an extraordinary 32 TB/s

- The Feynman architecture (2028) is confirmed to use next generation HBM, likely HBM5

From H100 to Rubin Ultra in four years, HBM capacity per chip goes up roughly 12x. Every time Nvidia ships another rack of GPUs, the memory inside that rack is meaningfully larger than the last one.
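The multiples can be checked directly from the progression listed above. In this sketch I read "1 TB" as 1,024 GB, which is an assumption on my part. With 1,000 GB the overall multiple comes out at 12.5x instead.

```python
# (platform, launch year, HBM capacity in GB), as listed above.
# ASSUMPTION: Rubin Ultra's "1 TB" is taken as 1,024 GB.
roadmap = [
    ("H100", 2023, 80),
    ("B200 Blackwell", 2024, 192),
    ("B300 Blackwell Ultra", 2025, 288),
    ("Rubin R100", 2026, 288),
    ("Rubin Ultra", 2027, 1024),
]

baseline_gb = roadmap[0][2]
previous_gb = baseline_gb
for name, year, capacity_gb in roadmap:
    vs_h100 = capacity_gb / baseline_gb
    vs_previous = capacity_gb / previous_gb
    print(f"{year} {name}: {capacity_gb} GB, "
          f"{vs_h100:.1f}x H100, {vs_previous:.2f}x prior generation")
    previous_gb = capacity_gb

total_multiple = roadmap[-1][2] / baseline_gb

# Rubin R100 (13 TB/s) versus B300 (8 TB/s), both figures from the list above.
bandwidth_jump = 13 / 8 - 1
print(f"H100 to Rubin Ultra capacity multiple: {total_multiple:.1f}x")
print(f"Rubin R100 bandwidth jump over B300: {bandwidth_jump:.1%}")
```

Note that capacity is flat from B300 to Rubin R100. The gain in that step is in bandwidth, while the big capacity jump arrives with Rubin Ultra.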

And it's not just Nvidia. AMD's MI400 will pack 432 GB of HBM4 per GPU, 50% more than both the Nvidia Rubin R100 and the previous MI350. AMD is deliberately betting on memory density as its competitive edge. CEO Lisa Su stated the company will not be supply limited on its MI400 ramp, meaning AMD has secured its own multi year HBM supply agreements, pulling additional volume away from a market that is already sold out.

The HBM demand roadmap

NVIDIA

| Year | Nvidia Platform | HBM Generation | HBM per GPU | Bandwidth |
|---|---|---|---|---|
| 2023-2024 | H100 / H200 | HBM3 / HBM3E | 80-141 GB | 3.35-4.8 TB/s |
| 2025 | B300 Blackwell Ultra | HBM3E | 288 GB | 8 TB/s |
| H2 2026 | Rubin R100 | HBM4 | 288 GB | 13 TB/s |
| H2 2027 | Rubin Ultra | HBM4E | 1 TB | 32 TB/s |
| 2028 | Feynman | HBM5 (likely) | TBD | TBD |

Each generation brings a new supply contract, a higher price, and a tighter squeeze on available fab capacity.

AMD

| Year | Platform | HBM Gen | HBM per GPU | Bandwidth |
|---|---|---|---|---|
| 2024-25 | MI300X / MI325X | HBM3 / HBM3E | 192-288 GB | 5.2-8 TB/s |
| Q3 2026 | MI400 / MI455X (Helios) | HBM4 | 432 GB | 19.6 TB/s |
| 2027 | MI500 | HBM4E | TBD | TBD |

Counterpoint Research forecasts HBM demand for AI compute to grow 35x from 2024 to 2028. UBS forecasts HBM bit shipments jumping 35% in 2026 alone, with blended pricing per bit rising 18.5% year on year. Micron itself said it can only meet roughly 60% of its 2026 HBM demand. The other 40% simply goes unfilled, flowing into 2027 and beyond.
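Combining the two UBS figures gives a feel for what they imply for revenue. The sketch below treats revenue as bits shipped times blended price per bit. The combined number is my own arithmetic, not a figure UBS published.

```python
# UBS forecasts for 2026, as quoted above.
bit_growth = 0.35             # HBM bit shipments, year on year
price_per_bit_growth = 0.185  # blended price per bit, year on year

# Revenue = bits x price per bit, so the two growth factors multiply.
implied_revenue_growth = (1 + bit_growth) * (1 + price_per_bit_growth) - 1
print(f"Implied HBM revenue growth in 2026: {implied_revenue_growth:.1%}")

# Micron's stated ability to serve its own 2026 HBM demand.
micron_fill_rate = 0.60
unfilled_share = 1 - micron_fill_rate
print(f"Share of Micron's 2026 HBM demand left unfilled: {unfilled_share:.0%}")
```

On those inputs, revenue grows by roughly 60% in a single year, noticeably more than either bits or pricing alone would suggest.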

I also think the demand coming from hyperscaler custom silicon is underappreciated. HBM demand for AI compute ASICs (custom chips like Google's TPUs) is expected to grow 35x between 2024 and 2028, with average per chip HBM density projected to surge almost 5x by 2028 as hyperscalers hit the same memory bandwidth ceiling GPU customers hit before them.

The supply side can't keep pace with this. And the market is controlled by just three companies with formidable barriers to entry. Time to look at who they are.

4 - The oligopoly and its moat

The current market structure in global DRAM production is one of the most concentrated in any major industry on Earth. Samsung, SK Hynix, and Micron collectively control approximately 95% of global DRAM output. Within HBM specifically, SK Hynix holds approximately 62% market share, Micron ~21%, and Samsung ~17%. This is not a recent development though. It's the outcome of fifty years of brutal competitive selection.

| Company | Global DRAM Share | HBM Market Share | HBM Technology Status |
|---|---|---|---|
| Samsung | ~37–38% | ~17–22% | HBM4 production began Feb 2026 |
| SK Hynix | ~36% | ~50–62% | HBM3E dominant; HBM4 ramping |
| Micron | ~22–24% | ~21–25% | HBM4 in high-volume production Mar 2026 |

Having done a master's in Business Administration, I'm a big fan of organizational theory, which identifies barriers to entry as the primary mechanism that sustains oligopolistic structures. In memory, I think the moat is best understood through four overlapping layers, each reinforcing the others:

Layer 1: Process learning and yield curves

This is the deepest and in my view most underappreciated layer. Semiconductor manufacturing costs decline systematically with cumulative volume, but the knowledge embedded in yield improvement isn't transferable. It lives in processes, in the tacit expertise of thousands of engineers, in years of learning about tool maintenance, clean room protocols, and wafer handling at specific temperature and humidity ranges. SK Hynix's HBM3E yields of 80 to 90% on 12 high stacks were not purchased. They were earned through millions of wafers and years of failure analysis. The yield gap between leaders and followers in each HBM generation has historically been two to four years wide, and each generation is increasingly complex.

You can't buy your way through this with a big bag of money. That's what makes it so powerful.
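To show why stacked yields are so hard to earn, here is a simplified sketch of how per-layer yield compounds across a 12 high stack. It assumes every layer succeeds or fails independently and that one bad layer scraps the stack. That is my simplification for illustration, since real HBM yield also depends on die yield, testing, and repair.

```python
def stack_yield(per_layer_yield: float, layers: int) -> float:
    """Stack yield if each layer must succeed independently."""
    return per_layer_yield ** layers


def required_per_layer_yield(target_stack_yield: float, layers: int) -> float:
    """Per-layer yield needed to hit a target stack yield under the same assumption."""
    return target_stack_yield ** (1 / layers)


LAYERS = 12  # 12 high stack, as in the text

for target in (0.80, 0.85, 0.90):
    needed = required_per_layer_yield(target, LAYERS)
    print(f"Stack yield {target:.0%} needs about {needed:.2%} per layer")

# A seemingly strong 95% per-layer yield compounds into a weak stack yield.
print(f"95% per layer gives a stack yield of {stack_yield(0.95, LAYERS):.1%}")
```

Under this model, the 80 to 90% stack yields quoted above require each individual layer to succeed more than 98% of the time, which leaves very little room for process error.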

Layer 2: ASML as chokepoint

Beneath the process learning layer sits a second order moat of comparable power. ASML is the sole producer of extreme ultraviolet lithography machines in the world. Leading edge DRAM production below the 1y/1z node, and all HBM4 and HBM4E production at scale, requires EUV. ASML's order backlog stood at €38.8 billion at the end of 2025, with delivery slots constrained years in advance.

Here's where it gets extra interesting. In March 2026, SK Hynix placed the single largest publicly disclosed order in ASML's history: $7.97 billion for EUV scanners to be delivered through December 2027. That's roughly 30 new machines. The company that already leads on process learning is pre-committing the equipment infrastructure needed for the next two generations before rivals have even secured the same allocation. That's a competitive move that compounds an already existing advantage.

Layer 3: Customer co-development and architecture lock in

Qualifying a new HBM supplier requires six to twelve months of joint testing covering thermal behavior, signal integrity, power delivery, and system level integration with the GPU architecture. For Nvidia's Vera Rubin platform, SK Hynix supplies approximately 70% of HBM4, reflecting years of co-development work that goes far deeper than a procurement relationship.

Nvidia's software stack, driver architecture, and thermal management protocols are partially optimized around specific HBM implementations. Replacing a primary supplier mid generation would require re-qualification costing months of engineering time and delaying product launch. At the frontier of AI computing, where each month of deployment delay translates directly into competitive disadvantage and billions in lost revenue for hyperscalers, that switching cost is enormous.

Layer 4: Capital scale

A new entrant would need to finance a $15 to $20 billion fab, staff it with engineers who essentially don't exist outside the three incumbents, ramp yields on a technology generation that will already be obsolete by the time the fab comes online, and do all of this while incumbents are simultaneously moving to the next node. The economics are specifically structured so that the company with the most cumulative volume has the lowest cost per unit. Any entrant is permanently behind on cost structure until they've shipped billions of units, which requires winning customers they can't win until they have competitive costs.

That circular barrier is nearly impossible to break. The only challenger capable of spending at this scale is a government backed sovereign program.
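The cumulative volume argument can be sketched with Wright's law, the classic experience curve in which unit cost falls by a fixed percentage every time cumulative output doubles. Using Wright's law here is my choice of model, and the 20% learning rate and both volume figures are hypothetical.

```python
import math


def unit_cost(cumulative_units: float, first_unit_cost: float = 100.0,
              learning_rate: float = 0.20) -> float:
    """Wright's law: cost drops by `learning_rate` with each doubling of cumulative volume."""
    if cumulative_units < 1:
        raise ValueError("cumulative_units must be at least 1")
    exponent = math.log2(1 - learning_rate)  # negative, so cost declines with volume
    return first_unit_cost * cumulative_units ** exponent


# HYPOTHETICAL cumulative volumes for an incumbent and a new entrant.
incumbent_cost = unit_cost(1_000_000_000)
entrant_cost = unit_cost(10_000_000)

print(f"Incumbent unit cost (index): {incumbent_cost:.3f}")
print(f"Entrant unit cost (index): {entrant_cost:.3f}")
print(f"Entrant cost disadvantage: {entrant_cost / incumbent_cost:.1f}x")
```

With a 100x gap in cumulative volume, the entrant sits at a multiple of the incumbent's unit cost, and it can only close that gap by shipping volume it cannot yet sell at a competitive price.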

Then there is China

CXMT is the most serious emerging challenger. The company doubled revenue to $8 billion in 2025, is targeting HBM3 production at its new Shanghai facility with mass production expected by 2027, and reportedly delivered HBM samples to Huawei for domestic AI accelerators. It's currently three years behind the leading edge, a gap reduced from more than six years in 2020.

But I think there is an important nuance here: export controls prohibiting EUV tool sales mean CXMT is building HBM3 with DUV equipment at yields and densities below the standards required by Nvidia and global cloud providers.

CXMT will almost certainly develop a domestic HBM supply chain over the next three to five years, but it will serve the Chinese domestic market rather than compete for global hyperscaler contracts. At the premium HBM tier where the incumbents earn their margins, the China threat is effectively blocked by the EUV ceiling for the foreseeable investment horizon.

The moat is strongest at the intersection where process learning, equipment access, and customer co-development all reinforce each other in a feedback loop. The only realistic path to moat erosion I can see is a fundamental architectural shift: a computing paradigm where the GPU and HBM co-package model becomes obsolete. Every roadmap projection currently available points in the opposite direction though.

5 - How the three players differ

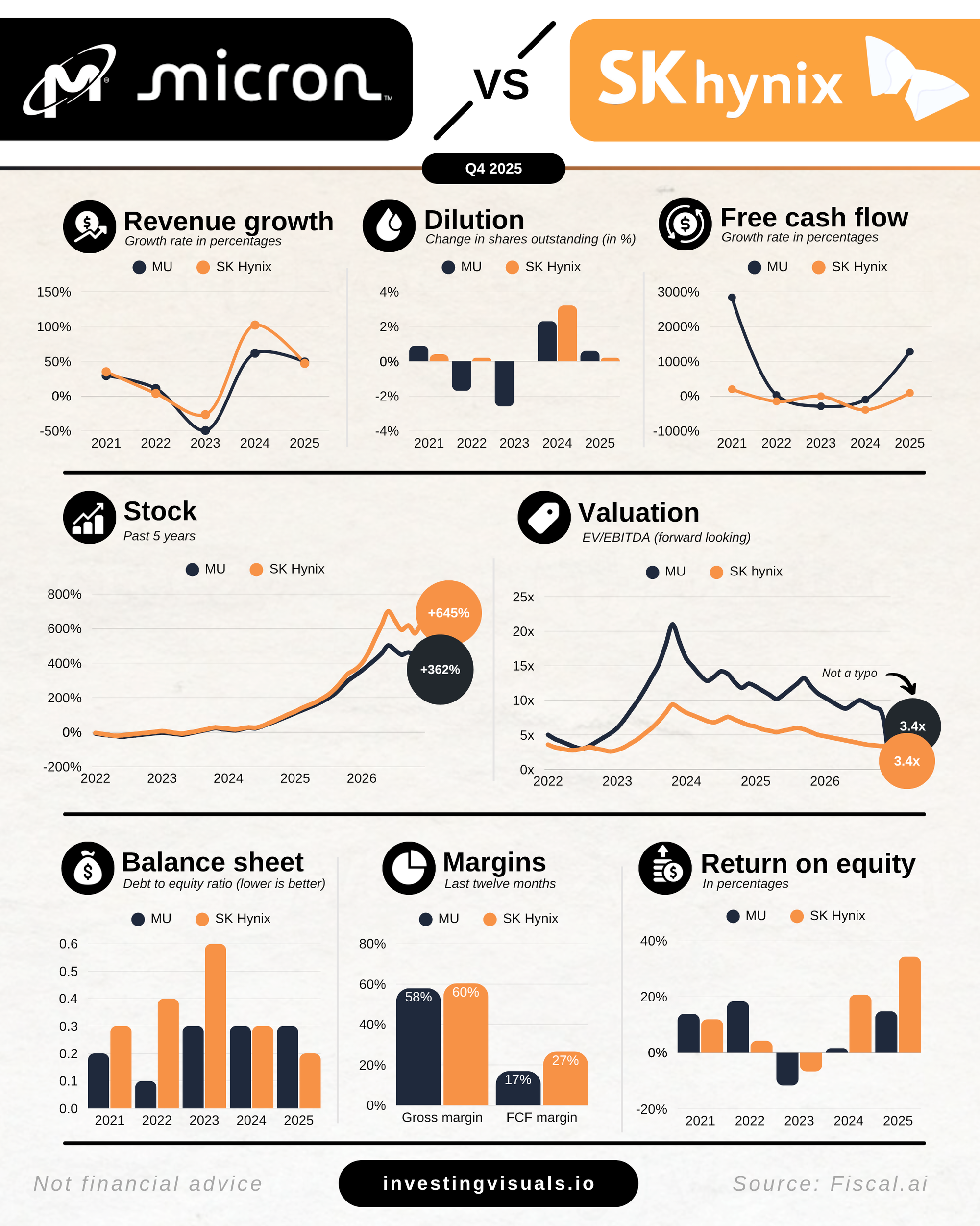

The three companies compete across the same fundamental product categories (DRAM, NAND flash, and HBM), but their strategic positioning, technical architecture, and business models diverge considerably.

On the technical side, the differences are meaningful.

- SK Hynix uses Mass Reflow Molded Underfill (MR-MUF), a process that bonds all layers simultaneously using a liquid epoxy compound with roughly twice the thermal conductivity of conventional film.

- Samsung uses Thermal Compression Non-Conductive Film (TC-NCF), which bonds each layer individually. MR-MUF produces higher throughput and better thermal dissipation, which is the primary reason SK Hynix achieves 80 to 90% yields on 12 high stacks while Samsung has struggled at lower thresholds.

- Micron takes a differentiated approach, achieving approximately 30% lower power consumption than competitors, a meaningful edge in data centers where power costs are substantial.

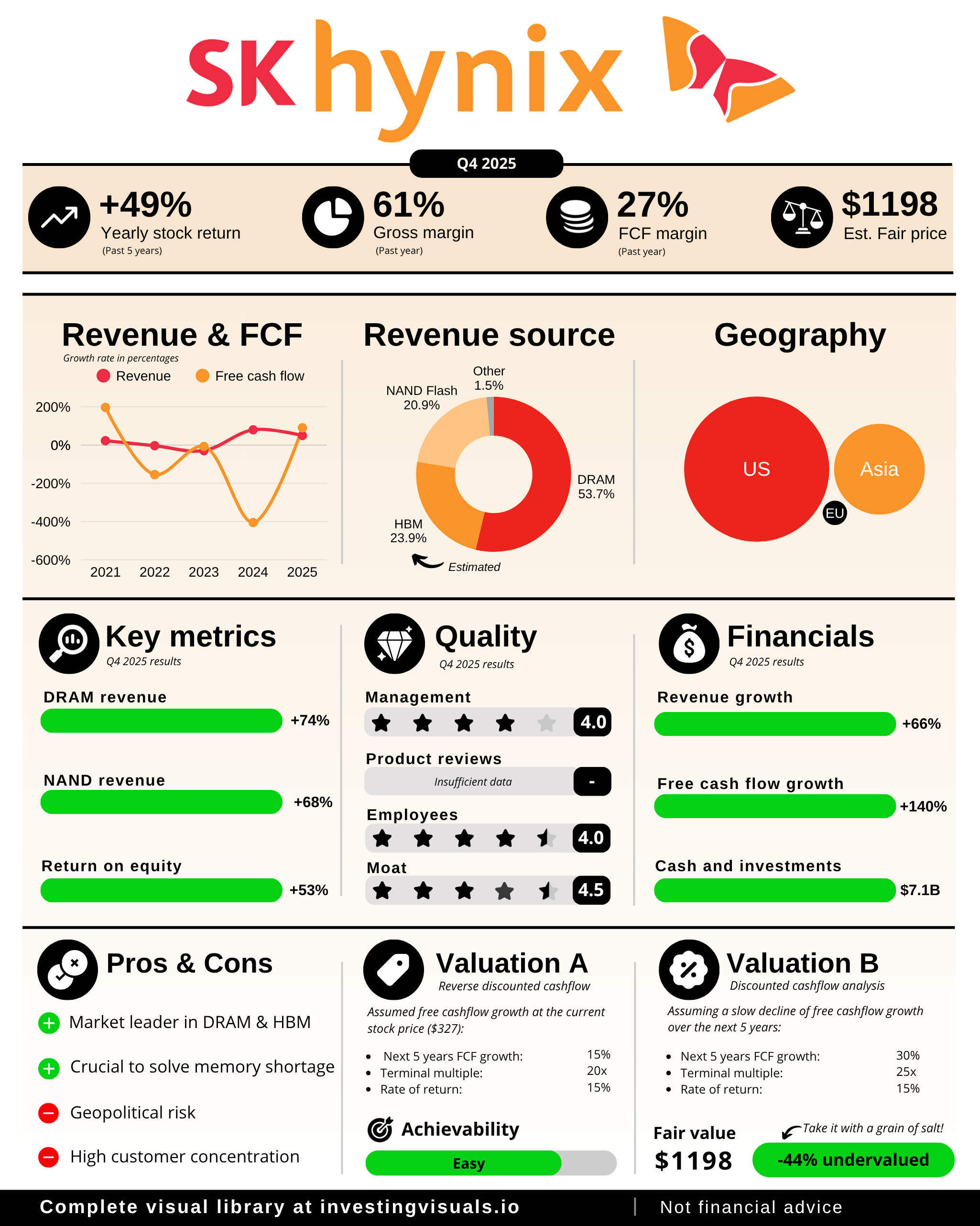

SK Hynix: Highest quality

SK Hynix is the purest memory play. It operates only in memory, with a subsidiary (Solidigm) in enterprise SSDs. All capex, all engineering, all management attention flows into DRAM and HBM.

HBM revenue more than doubled year over year in 2025. Q4 2025 gross margin reached 69%, surpassing TSMC's 62% for the same quarter. That's a massive achievement. TSMC is the most respected semiconductor foundry in the world and consistently trades at premium multiples reflecting its structural moat. And SK Hynix posted a higher margin.

The technological moat in MR-MUF packaging is strong and defensible. Samsung has been studying and reportedly attempting to adopt the technology, and the fact that it hasn't closed the yield gap despite enormous resources confirms that process learning is a real barrier, not just a head start.

A legitimate concern is customer concentration. A single unnamed "major customer" (we all know it's Nvidia) accounted for 23.9% of total global sales in FY2025, equivalent to $17.6 billion. About 90% of HBM revenue flows through that relationship. But Nvidia's roadmap calls for 1 TB of HBM per chip on Rubin Ultra. Even if Nvidia diversified sourcing aggressively, the absolute volume flowing to SK Hynix would still grow. All three players are sold out, so Nvidia has nowhere else to go for the foreseeable future.
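The diversification argument is simple share times volume arithmetic. The sketch below works per GPU and holds GPU unit volumes constant. The ~70% share is the Vera Rubin HBM4 figure cited earlier in this piece, while the 50% future share is a hypothetical diversification scenario. As above, 1 TB is read as 1,024 GB.

```python
def supplied_gb(hbm_per_gpu_gb: float, supplier_share: float) -> float:
    """HBM capacity per GPU that flows to one supplier."""
    return hbm_per_gpu_gb * supplier_share


# Rubin R100: 288 GB per GPU, SK Hynix at roughly 70% of HBM4 supply.
current_gb = supplied_gb(288, 0.70)

# Rubin Ultra: 1 TB per GPU, HYPOTHETICAL share cut to 50%.
future_gb = supplied_gb(1024, 0.50)

# Share at which SK Hynix's per-GPU volume would merely stay flat.
break_even_share = current_gb / 1024

print(f"Per-GPU volume today: {current_gb:.1f} GB")
print(f"Per-GPU volume on Rubin Ultra at 50% share: {future_gb:.1f} GB")
print(f"Break-even share on Rubin Ultra: {break_even_share:.1%}")
```

On these assumptions, SK Hynix's share would have to fall below roughly 20% before its per-GPU volume shrank at all.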

The potential ADR listing, with a decision expected around mid 2026, would remove access friction, broaden the shareholder base, and could be a meaningful near term catalyst.

2026 capex is expected at approximately $28 billion, up from ~$19 billion in 2025. Beyond this year, the Yongin cluster alone carries ~$21 billion in committed construction spend through 2030, with a longer term pledge of roughly $400 billion (that's not a typo) to build out the full complex through 2050.

Micron: High quality and most accessible

Micron went from roughly 5% HBM market share two years ago to 21 to 25% today. That's top notch execution and extraordinarily hard to pull off. Its HBM4 is now in high volume production for Vera Rubin, delivering over 2.8 TB/s bandwidth at greater than 20% better power efficiency than the prior generation.

A 74% gross margin is almost unheard of for a manufacturing business. The geopolitical dimension is, in my view, underappreciated. The U.S. government has made semiconductor supply chain security a national priority, and Micron is the only U.S. listed pure play DRAM and HBM manufacturer with domestic production capacity backed by billions in CHIPS Act funding. That gives it a structural policy tailwind that Korean competitors cannot replicate.

The capex commitment is the primary quality risk. Micron is spending over $25 billion in 2026 on new domestic fabs that won't produce meaningful supply until 2027 and beyond, with FY2027 capex guided to increase by a further $10+ billion. If the memory cycle turns before those fabs are filled, the fixed cost base becomes a significant liability. This applies to SK Hynix and Samsung as well by the way. Memory companies that overbuild historically take multiple years to work through the consequences.

Samsung: Lower quality, but upside on execution

Samsung is a diversified conglomerate spanning memory, foundry, consumer electronics, displays, and home appliances. That diversification dilutes exposure to the memory story because memory is only one part of a massive, multi business holding structure.

The foundry division continues burning capital while losing ground to TSMC. The HBM gap in 2024 and 2025, which resulted in losing Nvidia allocations, wasn't a production problem. It was a yield and qualification problem rooted in an internal organization that struggled with the speed and focus the HBM competition demands.

Samsung's start of HBM4 production for Nvidia in February 2026 is an important qualification milestone. But it remains to be seen whether Samsung can truly compete with SK Hynix and Micron at the premium tier, or will simply execute as a commodity incumbent. Right now, I think its focus is spread too thin to go head to head with either of them.

At 5 to 6x EV/EBITDA, the valuation already prices in considerable disappointment though. Samsung committed $73.3 billion for 2026 in total capex and R&D combined, with roughly $40 billion in pure semiconductor capex, up ~20% year on year. The question is whether the conglomerate structure, the foundry drag, and the HBM execution history justify the discount.

| Dimension | SK Hynix | Micron | Samsung |
|---|---|---|---|
| Business model | Memory only | DRAM + NAND + HBM | Full conglomerate |
| HBM market share | ~62% | ~21–25% | ~17–22% |
| Q4 2025 operating margin | 58% | ~47% adj. | Recovering; below Hynix |
| HBM packaging technology | MR-MUF (proprietary thermal advantage) | TC-NCF; ~30% power efficiency lead | TC-NCF; yield improving |
| Nvidia relationship | Primary supplier (~90% of its HBM revenue) | Second source, ramping | Reclaiming qualification |
| U.S. listing | No (Korea only) | Yes (NASDAQ: MU) | No (Korea only) |
| CHIPS Act exposure | None | $6B+ in grants | None |
| Key risk | Nvidia customer concentration | Capex overrun if cycle turns | Conglomerate structure; HBM execution lag |

6 - What's already priced in

When I first looked at this sector from a distance and saw SK Hynix up 475%, Micron up 500%, and Samsung up 250%, my initial reaction was clear: I missed the boat.

But after really diving into it, I think both SK Hynix and Micron in particular still have a meaningful way to go. While I believe the real easy money has been made, I see significant upside from today's price points for both companies as the supply demand imbalance persists into 2027 and potentially 2028.

For context, both SK Hynix and Micron trade at a 40% discount on an EV/EBITDA basis compared to TSMC. That's not an apples to apples comparison, but it shows they aren't trading at lofty valuations either. Their stock prices simply followed a major uptick in revenue, margins, and net income.

With HBM growing at roughly 40% per year, with each GPU generation demanding more memory than the last, with supply shortages likely running through at least 2027, and with new generation HBM commanding meaningfully higher pricing than the one before it, I don't think the earnings base of these companies has peaked. They're in the middle of the ramp.

An important risk worth watching: Rubin Ultra ships in H2 2027, Feynman arrives in 2028. Between now and then, if hyperscaler capex gets cut or Nvidia misses a platform transition, the memory ramp takes a direct hit.

A material reduction in infrastructure spending guidance from Microsoft, Google, Amazon, or Meta would reprice the entire memory segment quickly. The capital flow throughout the AI supply chain starts with the hyperscalers, and when that capital starts to dry up, every business further down the chain, including memory, gets impacted materially.

7 - What I'm planning to do

I like to own market leaders because winners tend to keep winning. With that in mind, I'm planning to initiate a position in SK Hynix soon, while also keeping a close eye on Micron. I'll start with a small allocation of around 2% and scale from there. It's a volatile stock, so I'm not in a rush and will use that volatility to build my position over time.

SK Hynix is my preferred pick because of several advantages:

- Technology lead with MR-MUF and the highest HBM yields in the industry

- Pure play into memory with strong management focus

- Market leadership in HBM at ~62% share

- Deepest engineering integration with Nvidia

- Potential ADR listing increasing accessibility for U.S. investors

- Slight valuation discount versus Micron

This probably won't become a true long term position in the way I usually think about holdings. The boom and bust nature of the memory business, even in a structurally improved version, makes me cautious about treating it like a compounder.

I'll closely watch hyperscaler demand and how it trickles through the AI supply chain. If I see signs of a slowdown or an inflection in the demand supply environment, I'll likely reduce or exit before the cycle turns.

Thank you for reading! I hope you found this deep dive insightful.

As always, this is not financial advice. Always do your own research before making any investment decision.
